Satellite Operations Trace System Coming Soon

Satellite operations do not fail only when a command is wrong. They also fail when nobody can cleanly explain where an action came from, who approved it, what system executed it, whether it should have been possible in the first place, and what the surrounding context looked like when it happened. In complex operational environments, that kind of ambiguity is operational drag. It slows response, weakens confidence, complicates review, and turns otherwise manageable events into long and expensive acts of reconstruction. The deeper the environment, the more tools, operators, scripts, interfaces, service accounts, approvals, and machine-driven actions accumulate around it. At some point the problem stops being whether a log line exists and becomes whether the organization can defend its own history under pressure.

Satellite Operations Trace System, or SOTS, exists to reduce that ambiguity. It is a controlled execution and traceability layer for satellite and ground operations environments that need actions to be attributable, reviewable, and defensible. SOTS is designed to sit between intent and execution, recording who initiated an action, under what authority, through what interface, against which target, with what policy decision, and with what observable result. It is not a replacement for mission systems, telemetry systems, or satellite control applications. It is the operational trace spine around them. Its purpose is straightforward: make actions easier to govern, easier to audit, easier to reconstruct, and much harder to lose in the fog of modern operational tooling.

The Problem SOTS Is Built To Solve

Modern satellite operations rarely exist inside one clean system, they live across consoles, orchestration layers, service endpoints, scripts, mission tools, ticketing workflows, operator handoffs, maintenance windows, test procedures, and emergency actions taken under imperfect information. Even in disciplined environments, the actual path from decision to execution can become fragmented. One tool initiates an action. Another system authenticates it. A third system executes something adjacent to it. Logs end up in different places. Approval may live in email, chat, a runbook, or an entirely separate workflow. Later, when someone needs to understand what happened, the organization is left comparing timestamps across disconnected records and hoping the story still fits together.

That arrangement creates a familiar set of problems: teams struggle to determine who actually initiated a change, it becomes difficult to separate an approved action from an unauthorized one that merely looks similar in the logs. Anomalies take longer to investigate because the surrounding operational context is scattered. Post-event review turns into archaeology. Leadership asks simple questions and receives complicated, conditional answers. Compliance becomes a matter of stitching together partial evidence. Operators waste time proving what they already know happened. Engineers waste time trying to infer causality from systems that were never designed to preserve it cleanly.

SOTS is built for that gap. It does not assume the surrounding environment is simple, elegant, or new. It assumes the opposite. It assumes that operators, automation, and mission software all participate in actions that later need to be attributed, reviewed, replayed, and defended. It assumes organizations need a better answer than “the logs are probably in there somewhere.”

Why Ordinary Logs Are Not Enough

Most operational environments already produce logs, sometimes in alarming quantity. That is not the same as producing a defensible action history. A log can show that an event occurred. It can show that a service responded. It may show an API call, a command outcome, a status transition, or a system message. What it usually does not provide, at least not by itself, is a coherent operational chain that answers the questions decision makers actually care about. Who requested the action. Who or what was allowed to perform it. Whether it matched policy. Whether it was part of an approved workflow. Which interface initiated it. What target it affected. What other events surrounded it. Whether the action can be replayed, reviewed, or challenged later without guessing.

This distinction matters because operational review is rarely just about whether something technically happened. It is about whether it happened correctly, legitimately, and within bounds. Ordinary logs are good at emitting fragments. They are less good at preserving intent, authority, and consequence as a single accountable thread. That is especially true in environments where automated tools, scripts, service accounts, and human operators all contribute to the same operational surface.

SOTS treats action history as a first-class operational concern rather than a side effect. It preserves the decision path, the policy path, the execution path, and the resulting trace in one place. That makes review faster, audit cleaner, and incident reconstruction less dependent on institutional memory and heroics.

What SOTS Actually Is

SOTS is a self-hosted operational trace and controlled execution system designed for satellite and ground operations environments. It provides a formal record of actions initiated by operators, automation, scripts, services, and agentic systems. It can govern execution directly, record approvals and policy decisions, and write an append-only trace that preserves both the action and the context around it.

At its core, SOTS is concerned with five things: observation, control, approval, execution, and trace. Observation means the system can see actions and related events entering its boundary. Control means it can govern whether a requested action is permitted. Approval means it can record the authority under which an action proceeds. Execution means the action can be carried out through controlled interfaces rather than by informal side paths. Trace means the entire chain can be recorded in a tamper-evident history that remains understandable later.

That combination is what makes SOTS more than a log collector and less than a replacement mission platform. It is not trying to own the whole stack. It is trying to make the stack more attributable and less ambiguous.

What SOTS Records

SOTS is designed to record the details that matter when operational history has to hold up under scrutiny. That includes identity, source, authority, interface, target, timing, policy outcome, execution state, and surrounding evidence. In practical terms, that means SOTS can preserve who initiated an action, whether the initiator was a named operator, a service process, an automation pipeline, a script, or a policy-bound agentic system. It records how the request entered the system, what capabilities were in scope, what policy checks were applied, which target or subsystem was involved, and what execution result followed.

It can also preserve correlation information needed to tie related actions together across a sequence of events. That matters because complex operational changes are rarely one command and one response. They are chains. A change request may trigger validation, approval, execution, verification, rollback, or follow-on actions. Without correlation, those events scatter into unrelated noise. With correlation, they become an operational story that can actually be read.

SOTS is not interested in collecting everything merely because it exists. It is interested in preserving the parts of operational history that make the rest make sense.

Controlled Execution Instead Of Informal Action Paths

One of the biggest sources of trouble in mature environments is the growth of informal action paths. A command can be executed through an approved interface, but it can also be executed through a script, a backdoor tool, a convenience wrapper, or a service account that everyone swears is temporary until it survives for nine years. These paths accumulate because they are useful in the moment. Later, they become risk. Nobody is entirely sure which actions are fully governed, which are merely logged, and which bypass both.

SOTS addresses that by providing a controlled execution layer. Rather than treating execution as something that happens elsewhere and may or may not be traceable later, SOTS can sit in front of sensitive actions and enforce capability scope, authority, and policy checks before execution proceeds. This does not eliminate the need for surrounding operational controls. It does make the action path easier to govern and much easier to explain afterward.

That matters in routine work and it matters even more during anomalies, emergency actions, and periods of operational stress. When people are moving quickly, the value of a controlled path increases, not decreases. Speed without traceability is just fast confusion.

Approval, Authority, And Policy

Operational environments often know how to record events without cleanly recording why those events were allowed. That is a serious blind spot. An action can be technically valid and still procedurally wrong. It can come from an authorized account without matching the authority intended for that moment. It can pass through systems that recognize the credential but not the context.

SOTS is designed to preserve the authority context around actions. That means recording what capability or permission was in scope, what policy checks were applied, whether approval was required, whether approval was granted, and under what boundary the execution proceeded. The point is not to create bureaucracy for its own sake. The point is to preserve a usable answer to the question of why the system allowed the action to happen.

That answer becomes especially important after anomalies, disputed actions, procedural review, or external oversight. An organization does not want to explain that a command was possible because a service account technically had access and nobody noticed the mismatch for three years. It wants a more disciplined answer than that. SOTS helps provide one.

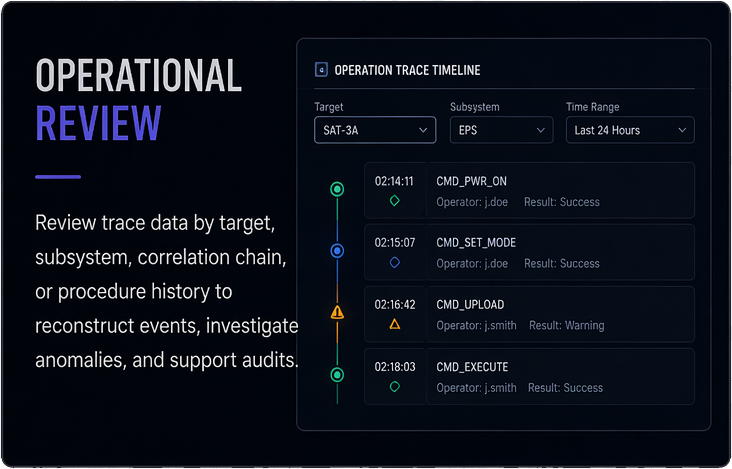

Replayable Operational History

One of the most valuable characteristics of a serious trace system is replayability. Review should not depend entirely on human recollection or on scavenging fragments from adjacent tools. It should be possible to reconstruct the sequence of operational events in a way that preserves the original context. That does not mean replaying a satellite mission inside a cartoon simulator. It means replaying the action history in a way that allows reviewers to see sequence, cause, boundary, policy outcome, and result.

SOTS preserves action and trace data in a way that supports this kind of reconstruction. Teams can review what happened, in what order, through which path, with which authority checks, and with what observed outcomes. That makes anomaly review more disciplined, incident analysis faster, and program-level learning less dependent on rumor. It also supports test and validation work by making it easier to compare intended action patterns with actual execution history.

Replay is not theater. It is one of the only ways organizations can consistently learn from operational history without reinventing the same avoidable confusion each time.

How SOTS Fits Into Existing Environments

SOTS is designed to fit alongside existing operational systems rather than pretending to replace them. It does not need to become the mission system. It does not need to replace telemetry pipelines, planning tools, flight systems, or every existing operational interface. In most cases, its value comes from being the trace and control spine around actions that already need stronger governance and clearer history.

That means SOTS can be introduced incrementally. An organization can start by placing it in front of a narrow class of sensitive actions or a defined operational boundary. It can then expand coverage based on where ambiguity, risk, or review burden is highest. This matters because real environments do not tolerate reckless replacement programs. They tolerate improvements that solve a real problem while respecting what is already there.

That incremental posture is a strength. It lets teams deploy discipline where they need it most, prove value quickly, and extend the system deliberately instead of through grand promises and later regret.

Air-Gapped And Restricted Environments

SOTS is intended for environments where control over deployment matters. It is designed to be self-hosted, low-dependency, and suitable for restricted or disconnected networks where cloud relay, external telemetry, or externally dependent architectures are inappropriate. This is not a lifestyle preference. It is an operational requirement in many serious environments.

Systems that govern sensitive actions should not themselves introduce fragile external dependencies. They should be understandable, locally operated, and capable of running inside the organization’s chosen boundaries. SOTS is built with that in mind. The goal is not to turn a controlled operational trace system into another platform that requires a constellation of external services just to remain upright.

That design posture also improves trust. Teams are far more likely to rely on a system when they understand its boundaries, where the data lives, and how it behaves under constrained conditions.

Where SOTS Helps The Most

SOTS is most valuable in environments where actions matter, where ambiguity is expensive, and where post-event explanation cannot rely on guesswork. That includes operational command paths, procedural actions that require approval, automation pipelines acting on sensitive systems, maintenance or configuration changes in tightly governed environments, and any mixed environment where people and machines share the same action surface.

It is especially useful after the moment nobody wants to discuss beforehand. An anomaly appears. A system behaves differently than expected. A target enters an unexpected state. A review board wants to understand sequence and authority, not excuses. At that point, the difference between scattered logs and a coherent action trace becomes painfully clear. SOTS exists so the organization has something better than fragments.

It also helps before those moments by improving routine discipline. People behave differently when operational actions have a clear governed path and a durable trace. Systems become easier to reason about. Change becomes easier to review. Questions become easier to answer without assembling a search party.

What SOTS Is Not

SOTS is not a replacement for an end-to-end satellite control platform. It is not a telemetry system. It is not a flight dynamics engine. It is not a mission planner. It is not a magical answer to every integration, cybersecurity, test, and program management problem that can affect a large operational environment. Any product that claims all of that deserves a long suspicious pause.

What SOTS does provide is narrower and therefore more believable. It provides a controlled, reviewable, tamper-evident operational trace for actions and related execution history. It helps organizations reduce ambiguity, strengthen accountability, and make operational history easier to defend. That boundary is not a weakness. It is the reason the system can remain understandable and deployable.

Good software earns trust partly by knowing what job it is actually there to do. SOTS is built with that in mind.

Why The Boundary Matters

Operational software becomes dangerous when it tries to solve too many unrelated problems at once. A system intended to improve traceability can easily turn into a bloated “platform” that absorbs unrelated workflow, reporting, orchestration, and management ambitions until it becomes another source of risk. That pattern is common, and it usually arrives wearing the language of convenience.

SOTS is intentionally narrower. It focuses on attributable action execution and operational traceability. That gives it a clean role in the environment and helps keep the architecture disciplined. It also makes buying, deploying, reviewing, and explaining the system easier. Serious buyers do not need another sprawling abstraction layer. They need something obvious, useful, and defensible.

In other words, the system is more valuable because it does not try to become the moon and the weather at the same time.

Operational Review Without Archaeology

One of the hidden costs in complex environments is the amount of time spent reconstructing events that should have been obvious. Engineers compare logs from different systems. Operators try to remember sequence under pressure. Reviewers ask for screenshots, message fragments, service traces, and approval records that were never designed to live together. Every hour spent rebuilding the past from partial evidence is an hour not spent fixing the actual problem.

SOTS reduces that tax. By preserving action history, authority context, execution path, and related outcomes in a single operational trace, it shortens the path between event and understanding. That does not merely save time. It improves the quality of decisions made during response, review, and prevention work.

Organizations with serious responsibilities should not be forced to perform archaeology every time something important happens. They should have a system that remembers what they did.

Why Self-Hosted Still Matters

There is a modern habit of treating software value as something inseparable from subscription gravity, external dependency, and remote dashboards. That posture makes sense for many categories of software. It makes much less sense for systems involved in sensitive operational boundaries. If the software governs actions or preserves critical trace history, control over its deployment matters.

SOTS is built for operators who still want that control. Self-hosted deployment, understandable boundaries, and minimal dependency sprawl are not retro preferences. They are practical design decisions for environments where trust is earned through predictability. The less magic a serious system requires in order to exist, the easier it is to justify, operate, and defend.

That approach also keeps the software aligned with the people using it. The system belongs in their environment, under their control, behaving in ways they can explain without summoning a vendor séance.

The Case For SOTS

Satellite and ground operations environments do not need more decorative abstraction. They need fewer mysteries. They need a cleaner account of who did what, under what authority, through which path, and with what result. They need operational history that can survive scrutiny. They need a way to govern action without pretending the surrounding environment is simple. They need a trace system that can stand next to real operations without becoming another sprawling source of ambiguity.

SOTS is built to do exactly that. It provides controlled execution, attributable action history, policy-aware governance, and replayable operational traceability for environments where those things are too important to leave scattered. It does not promise to solve everything. It promises to make one important class of problems much easier to see, govern, and explain. In serious systems, that is not a small promise. It is one of the few promises worth making.

Resources

No Bloat. No Spyware. No Nonsense.

Modern software has become surveillance dressed as convenience. Every click tracked, every behavior analyzed, every action monetized. M Media software doesn't play that game.

Our apps don't phone home, don't collect telemetry, and don't require accounts for features that should work offline. No analytics dashboards measuring your "engagement." No A/B tests optimizing how long you stay trapped in the interface.

We build tools, not attention traps.

The code does what it says on the tin — nothing more, nothing less. No hidden services running in the background. No dependencies on third-party APIs that might disappear tomorrow. No frameworks that require 500MB of node_modules to display a button.

- • Payment fails? App stops

- • Need online activation

- • Forced updates

- • Data held hostage

- • Buy once, own forever

- • Works offline

- • Optional updates

- • You control your data

Simple Licensing. No Games.

We don't believe in dark patterns, forced subscriptions, or holding your data hostage. M Media software products use clear, upfront licensing with no hidden traps.

You buy the software. You run it. You control your systems.

Licenses are designed to work offline, survive reinstalls, and respect long-term use. Updates are optional, not mandatory. Your tools don't suddenly stop working because a payment failed or a server somewhere changed hands.